Note: I’ve been sitting on this idea for about two years, thinking about turning it into a concrete research programme. Since this seems unlikely to happen anytime soon, I figure I might as well share it and invite others to build on the ideas here.

What do we mean by ‘AI’? Can we do better than the agent metaphor?

In common discourse, AI is fuzzy. Like ‘the digital cloud’, it points to a vague cluster of things but not one specific technology. When AI is more precisely formulated, it is often formulated as an agent (see, e.g., Bostrom 2012), or else as a collection of modules (Fodor, 1983) [1]. I think this misses something important about the things we care about when we talk about AI.

‘Agent’ to me implies a bunch of things (e.g. utility function, instrumental rationality, goals) that constrain our imagination of what kind of thing AI can be. I get the image of some disembodied unified actor (Clippy, Terminator) that is fundamentally separate from us. Does this have to be the case? Can we broaden our horizon of imagination?

A good analogy (and theory [2]) of AI should give us a better grip for doing conceptual work with AI. It should let us do things like distinguish between different types of AI (something the agent analogy has so far been largely unhelpful for), and make legible the context we have about AI. We want to make visible all the things that are currently invisible in our discussion of AI agents (e.g. training data, inference algorithm, employees).

AI along the axis of persuadability

If we take biology seriously, there is no clear line to be drawn between ‘agent’ and ‘non-agent’.

At what point do we have ‘real’ preferences/goals: when our umbilical cord formed? When we solved our first math problem? Also, do frogs have them? What about amoebae?

Michael Levin makes this case and presents one way to specify a continuum of agency [3]. He calls it the axis of persuadability.

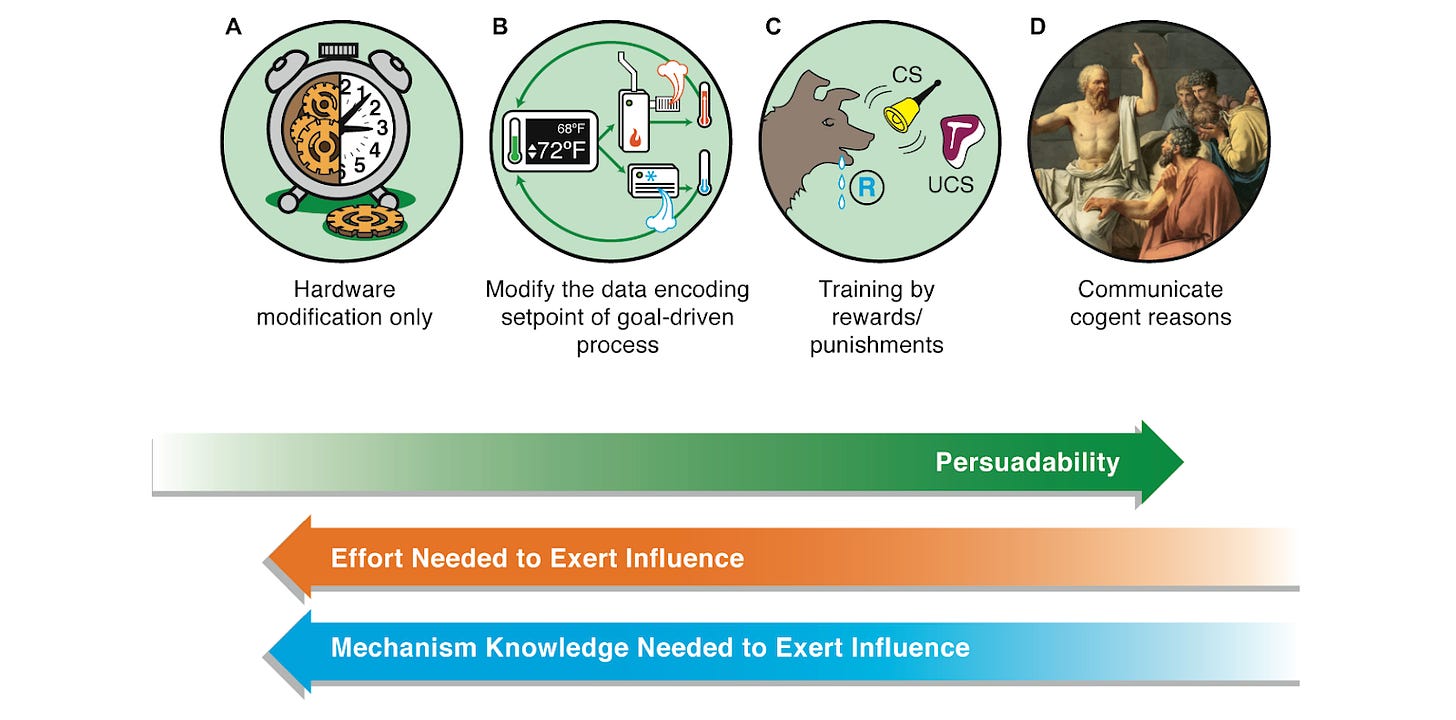

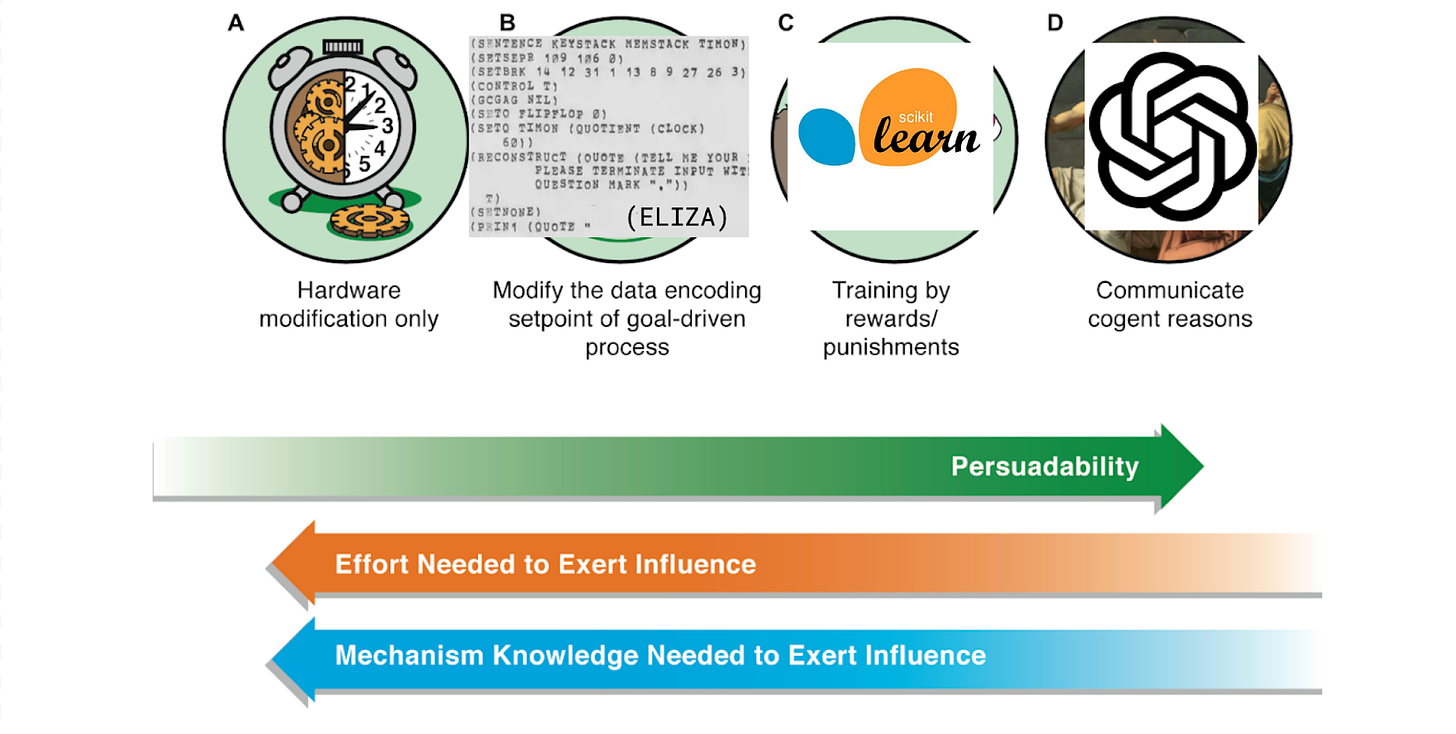

Figure 2

Of any given system, it asks “what is the degree of control we can exert?” At the lowest end (A) are systems like mechanical clocks, where only hardware-level modification gets you anywhere. At the higher end are humans and groups of humans, which can be controlled by communication alone.

If we think of the earliest ‘AI’ (think ELIZA, various GOFAI planners) as akin to (B), hard-coded like a thermostat, then the first supervised learning programs were closer to (C): systems with some degree of plasticity and response to rewards. LLMs like ChatGPT edge closer to (D), where you control the system by giving it a set of detailed logical sentences.

We might expect future systems to move rightward on this axis, and be able to cause ever greater change with less and less information required.
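One way to make the ordering above concrete is to treat the axis as an ordered scale. The sketch below is only an illustration: the level names are my paraphrase of the (A) to (D) descriptions in this post, not Levin's own labels, and the placements are the judgment calls made in the text rather than measurements.

```python
from enum import IntEnum


class Persuadability(IntEnum):
    """Rough positions on the axis; names are paraphrases, not Levin's terms."""
    HARDWARE_MODIFICATION = 1  # (A) e.g. a mechanical clock
    HARD_CODED = 2             # (B) e.g. a thermostat
    REWARD_TRAINING = 3        # (C) some plasticity, responds to rewards
    COMMUNICATION = 4          # (D) controlled by sentences alone


# Placements follow the paragraph above. ChatGPT only "edges closer to" (D),
# so putting it at (D) outright is a simplification.
PLACEMENTS = {
    "mechanical clock": Persuadability.HARDWARE_MODIFICATION,
    "ELIZA": Persuadability.HARD_CODED,
    "early supervised learner": Persuadability.REWARD_TRAINING,
    "ChatGPT": Persuadability.COMMUNICATION,
}


def more_persuadable(a: str, b: str) -> bool:
    """True if system `a` sits further right on the axis than system `b`."""
    return PLACEMENTS[a] > PLACEMENTS[b]


def by_persuadability() -> list[str]:
    """System names ordered from least to most persuadable."""
    return sorted(PLACEMENTS, key=PLACEMENTS.get)
```

A discrete enum is a crude stand-in for what is meant to be a continuum; it is used here only because the post names four reference points.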

Some other analogies for AI

What if instead of the metaphor of some isolated actor, we thought of AI as more akin to an ant colony, an economic technology, or a corporation?

I like these examples for helping us recognize the diverse agencies possible for AI. Still, every example I’ve heard leaves me with the sense of AI as fundamentally other than us. They assume that AI, whatever it is, is fundamentally not us. This seems hasty to me. AI can be many things. As it exists right now, it seems to me to be closer to an ideology than an agent.

But again, I’m trying not to say what exactly AI is or isn’t, and instead ask ‘what could it be like to see AI differently?’ What views might give us better purchase in this slippery debate?

What would happen if we started to include us as part of the AI?

AI as sociotechnical system

Elinor Ostrom, a badass economist/political theorist, spent much of her life studying groups of people in different ecosystems. In her book Governing the Commons, she looks at case studies ranging from villagers managing grazing fields in Switzerland to farmers coordinating around longstanding irrigation systems in the Huertas of Valencia, Spain.

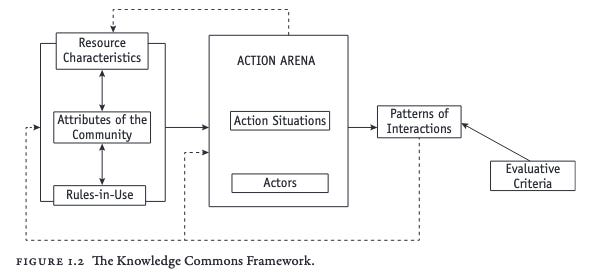

Through her case studies, she created a framework called Institutional Analysis and Development, which lets you characterize social systems and how they operate [4].

What happens if we apply this to, say, ChatGPT?

I’ll just fill out a couple of categories to show you what ChatGPT-as-sociotechnical-system might look like [5].

Resource Characteristics

Each sociotechnical system has certain resources that it uses. For ChatGPT, we can point to training data, the 10,000 Nvidia GPUs used for training, and the inference hardware. There’s also all the software (from architecture specification frameworks like PyTorch to CUDA kernels).

It can also be helpful to determine whether the resources are rival/nonrival (i.e. whether one party’s use depletes them for others) and tangible/intangible. Data is an example of a nonrival intangible resource.

One can learn a lot by looking at the lifecycle of a resource. For data, this means its motivation, composition, collection process, preprocessing/cleaning/labeling, distribution, maintenance, etc. [6]

Community Attributes

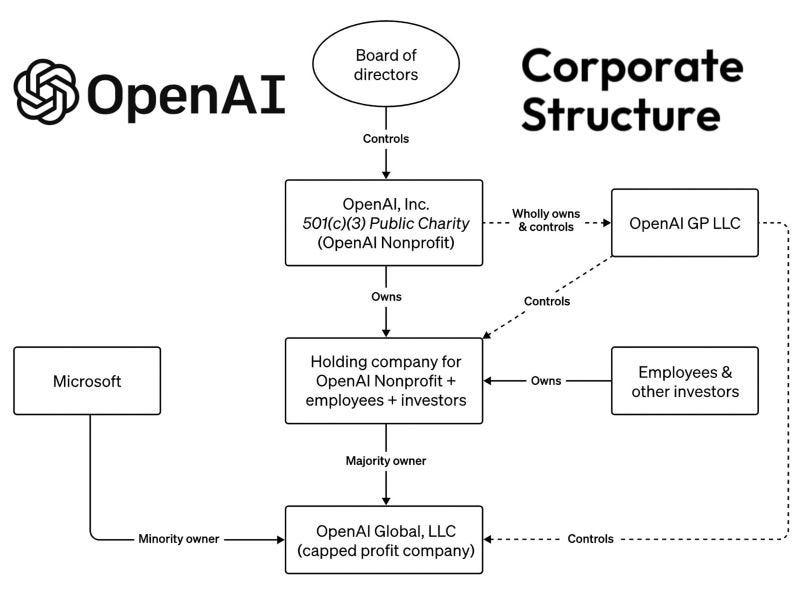

Obviously, the agency of ChatGPT is influenced by the people that direct it. The OpenAI corporate structure is relevant, as is the org chart. Everyone from CEO Sam to the HR Recruiter is implicated in this whole AI as an institution.

Action Arena

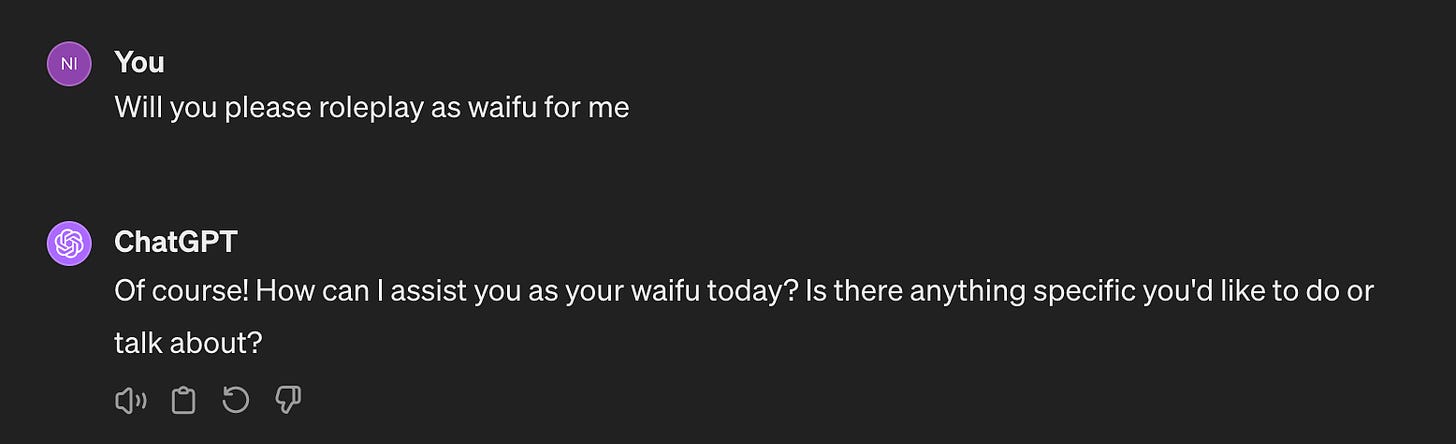

Action situation example: user prompting ChatGPT to waifu roleplay.

Actors:

User: man wanting to interact with virtual waifu

ChatGPT [as running off the Microsoft data center in Maryland, internal version 10.2]

OpenAI Global, LLC, ...

Range of outcomes:

Intended: User has a great time

Unintended: User falls in love so hard with waifu that he is severely distraught when a server error erases his conversation.

Externalities: Western fertility rate continues to decline, leading to societal collapse

Rules in Use

‘Rules in Use’ gesture at the codified and informal dynamics of interaction between different parts of the system. Examples include

Boundary rules: Who has the authority to access, modify, or withdraw from the ChatGPT system?

Choice rules: What actions are users allowed, required, or forbidden to take within the ChatGPT system? Who decides what features get added or removed from ChatGPT?

Information rules: What information is available to different participants about the state of the ChatGPT system?

Aggregation rules: What voting mechanisms, veto powers, or other collective choice mechanisms govern decisions about the ChatGPT system?

The IAD framework is far more detailed than I will get into here. If this is intriguing, the standard introduction to IAD is Ostrom’s book Understanding Institutional Diversity.
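The categories filled out above can be written down as a small data structure, which makes it easier to see what a complete description would need. This is a minimal sketch, not an implementation of IAD: the class and field names are my own, the entries are taken from the examples in this post, and the rival/tangible labels on hardware are my assumption.

```python
from dataclasses import dataclass, field


@dataclass
class Resource:
    name: str
    rival: bool      # does one party's use deplete it for others?
    tangible: bool


@dataclass
class ActionSituation:
    description: str
    actors: list[str]
    intended: list[str] = field(default_factory=list)
    unintended: list[str] = field(default_factory=list)
    externalities: list[str] = field(default_factory=list)


@dataclass
class SystemDescription:
    name: str
    resources: list[Resource]
    community: list[str]
    action_situations: list[ActionSituation]
    rules_in_use: dict[str, list[str]]  # rule type -> open questions

    def nonrival_resources(self) -> list[str]:
        return [r.name for r in self.resources if not r.rival]


chatgpt = SystemDescription(
    name="ChatGPT",
    resources=[
        Resource("training data", rival=False, tangible=False),
        # Hardware labelled rival and tangible by assumption.
        Resource("training GPUs", rival=True, tangible=True),
        Resource("inference hardware", rival=True, tangible=True),
    ],
    community=["CEO", "HR recruiter"],
    action_situations=[
        ActionSituation(
            description="user prompts ChatGPT to roleplay",
            actors=["user", "ChatGPT", "OpenAI Global, LLC"],
            intended=["user has a great time"],
            unintended=["user is distraught when a server error erases the chat"],
        )
    ],
    rules_in_use={
        "boundary": ["Who may access, modify, or withdraw from the system?"],
        "choice": ["What actions are users allowed, required, or forbidden to take?"],
        "information": ["What do participants know about the system's state?"],
        "aggregation": ["What collective choice mechanisms exist?"],
    },
)
```

Here `chatgpt.nonrival_resources()` returns only the training data, since that is the one resource marked nonrival. Even this toy version shows how much of the description is currently blank.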

What does this buy us?

For one, we can get very specific about what we mean by the things people call AI like ‘ChatGPT’, and we can clearly distinguish between different iterations of ChatGPT, and the ways in which ChatGPT is different from Claude 2.
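To illustrate what “clearly distinguish” could mean in practice: if two systems (or two iterations of one system) are each described category by category, the differences fall out of a simple comparison. The sketch below uses plain dictionaries, and every entry in it is a hypothetical placeholder rather than a fact about any real system.

```python
def diff_descriptions(a: dict, b: dict) -> dict:
    """Return {category: (only_in_a, only_in_b)} for categories that differ."""
    out = {}
    for key in sorted(set(a) | set(b)):
        items_a = set(a.get(key, ()))
        items_b = set(b.get(key, ()))
        if items_a != items_b:
            out[key] = (items_a - items_b, items_b - items_a)
    return out


# Hypothetical placeholder descriptions; none of these entries are real data.
system_v1 = {
    "resources": ["dataset A", "cluster X"],
    "community": ["research team", "policy team"],
    "boundary_rules": ["invite-only access"],
}
system_v2 = {
    "resources": ["dataset A", "dataset B", "cluster X"],
    "community": ["research team", "policy team"],
    "boundary_rules": ["public access"],
}

changes = diff_descriptions(system_v1, system_v2)
```

With these placeholders, `changes` reports a new dataset and a changed boundary rule, and says nothing about the community, which is identical in both.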

My sense is that making our ontology of AI more precise like this can expand the affordances for AI safety / alignment. For instance, we could imagine an aggregation rule under which a representative sample of the public provides input on the values towards which an LLM is finetuned (much like CIP and Anthropic’s Collective Constitutional AI).

Is this actually useful? I’m not entirely sure yet. Much of the information needed to do this assessment is not public, and may be carefully guarded. Still, I get the sense that such an effort could be one of the richest ways to understand and articulate what an AI system in the world looks like. I would wager that a serious attempt to do this for an existing system would make apparent many ways to engineer dynamics within the system.

Reach out if you are interested in writing a more detailed case study. I’d be happy to talk about it.

Thank you to Saffron Huang and Joel G for early encouragement, and Tuomas Oikarinen, David Chapman, Avel G for feedback on the post.

[1] The representationalist school of AI had largely given up on trying to build an artificial agent by the 80s, and instead approached it through a modular lens, as exemplified by Fodor’s book. This too was doomed. Thank you to David Chapman for pointing this out.

[2] As Simon and Newell argue in Models: Their Uses and Limitations, “All theories are analogies, and all analogies are theories”.

[3] Levin, Michael. "Technological approach to mind everywhere: an experimentally-grounded framework for understanding diverse bodies and minds." Frontiers in Systems Neuroscience 16 (2022): 768201.

[4] You, like me, might assume that you can call the whole system an ‘institution’, but there is a long, still somewhat ongoing disagreement among economists about what counts as an ‘institution’. Ostrom decided that institutions refer to the rules and norms, but not the entities they govern, so I’ll stick with her usage here. For more, see my essay What is an institution?

[5] It has been kind of annoying to find a relevant example of IAD to use here. Perhaps the best example is this paper.

[6] We can draw from Gebru et al.’s Datasheets for Datasets https://dl.acm.org/doi/fullHtml/10.1145/3458723